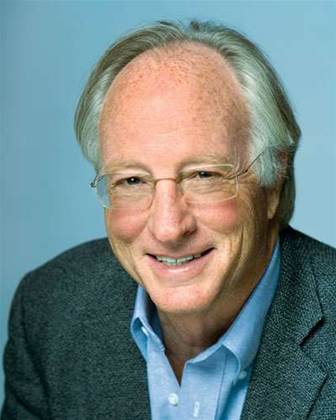

Kenneth Brill, visiting Sydney for the DC Strategics conference later this month, talks about the need for data centre resiliency with iTnews editor Brett Winterford.

iTnews: What led you to come up with the Tier system as we know it?

KB: It goes back some 18 years. We were working for United Parcel Service as consultants. Prior to 1989, UPS had been a package delivery company. Computing was a back-office function for them.

But that changed when they went into the overnight delivery business. Suddenly computing became critical to the life of the company.

The brand new data centre UPS had just built became obsolete overnight. The data centre was designed for back-office functions for a package delivery company. It was inadequate for an airline business.

What you have to remember is, the worst time for an airline business to be down is at night. You absolutely must have IT operational at night. Almost all airlines have mainframes running functions that, if they weren't done, would put the airline out of business.

In the case of UPS, an overnight IT outage back then could mean that two million packages were not delivered the next day. When you consider that UPS offered a ten dollar refund in the event your package did not go to its destination the next day, you are looking at every IT downtime event potentially costing them $20 million. A couple of those events and you could have afforded to build a new data centre.
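As a rough editorial illustration of the arithmetic in this answer, the sketch below multiplies the number of late packages by the per-package refund. The function name `outage_refund_cost` is hypothetical; the figures are the ones quoted above, and the calculation ignores any other costs of an outage.

```python
def outage_refund_cost(late_packages: int, refund_per_package: float) -> float:
    """Refund liability for a single overnight outage (illustrative only)."""
    return late_packages * refund_per_package


# Figures quoted in the interview: two million packages, ten dollars each.
cost = outage_refund_cost(2_000_000, 10)
print(f"${cost:,.0f}")  # $20,000,000
```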

Take yourself back to the late eighties. Business worked differently before then. You could shut IT down to do maintenance. It's amazing to think that only twenty years ago or so, that's the way things were.

We were contracted as consultants. We were just doing at the time what people do when they approach a problem - we applied new thinking. What we came up with was a standard that was set for the rest of the industry.

What we worked out was a way to stop power and cooling from being a gate for the rest of the business.

iTnews: What made you decide to publish and copyright the standard?

KB: We published the first standard in 1996. One of our clients was a guy from Hewlett Packard. He wanted a simple way to explain to his management why he was asking for US$50 million to build a data centre.

This is what is so interesting about this standard. It really has been a user-driven standard. Most standards will be driven by people who have something to sell. This is a standard driven by people that want to buy something.

iTnews: How often is the standard used?

KB: There are plenty of people that use and abuse this standard.

Anybody who makes a self-claim to a tier level needs to be looked at with a jaundiced eye.

Only accredited data centres are listed on the Institute's web site.

We have a process for evaluating what tier level a data centre is. When we look at some of the data centres that make their own claims, we find that they tend to be off by at least one tier, if not two. This can be very disappointing to the owner, who spent good money but missed the mark.

Often, they could have fixed it had they got some help in the beginning - usually it costs no more to do it the right way.

iTnews: Can you give us an example of a common mistake?

KB: In a Tier 3 data centre, you should be able to do maintenance on any part of the mechanical or electrical plant without IT shutting down. You should be able to draw a circle around every part of the data centre and be able to say that you can function without it. It's as simple as that. But so many data centres that call themselves Tier 3 would require IT to be shut down to perform maintenance on the plant. This means that most of the time the plant isn't maintained. When it fails, it will be a much more catastrophic failure.

Or another - I was reviewing a data centre not too long ago. The capacity they wanted to have was 10 megawatts (MW). Because of how they arranged things, they could only use 6 MW of that 10 MW on a concurrent maintenance basis, so the investment in the other 4 MW of power was lost. Now I am not sure that most customers would even have found the problem, but the people in our company look at 50 to 100 projects a year.
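One simplified way to see how capacity can be stranded like this: if any single power path must be removable for maintenance while IT keeps running, the usable load is the installed total minus the largest path. The sketch below assumes a hypothetical split of the 10 MW into paths of 4, 3 and 3 MW; the interview does not say how the site was actually arranged, and `usable_during_maintenance` is an illustrative name, not part of any Uptime Institute method.

```python
def usable_during_maintenance(path_capacities_mw):
    """Capacity still available when the largest single path is offline."""
    if len(path_capacities_mw) < 2:
        return 0  # a single path leaves nothing to run on during maintenance
    return sum(path_capacities_mw) - max(path_capacities_mw)


# Hypothetical arrangement of the 10 MW discussed above.
paths = [4, 3, 3]
installed = sum(paths)
usable = usable_during_maintenance(paths)
print(installed, usable, installed - usable)  # 10 6 4
```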

iTnews: Why don't more data centres engage the Uptime Institute for an official rating? Is it expensive?

KB: The cost is a fraction of a percent of the project cost. We don't make any money. We serve a validation function.

It is important to note that very few businesses can justify a Tier 4 standard. Tier 4 is fault tolerant - tolerant of any equipment failure. We don't even believe that most data centres have the business failure consequences to justify Tier 4. An outage would have to impact earnings for the quarter to justify Tier 4.

Many people need Tier 3, however. Google is a Tier 1 design and that is absolutely the right business decision for them. Our own little computer room is Tier 1 - and that is the right decision for us.

We are agnostic to what tier level you should have. If you do justify Tier 4, you need to know that it's what you are getting. It will more than pay off the small amount of money you pay to do this.
