The United States Government Accountability Office (GAO) has found major deficiencies in the failover plans for three classified supercomputing facilities used for testing nuclear weapons capabilities.

The classified supercomputers, operated by the US National Nuclear Security Administration (NNSA) at three sites in New Mexico and California, are also used to test the effect of changes to its nuclear weapons stockpile.

Some of the facilities are managed by nuclear and weapons heavyweights Babcock and Wilcox Company and Lockheed Martin Corporation.

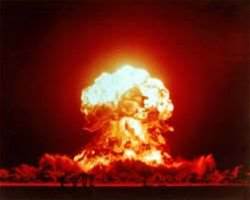

Although the supercomputers were not considered "mission critical" by the NNSA, the GAO reported that the machines were still critical to wider national security efforts since they are a "computational surrogate" to actual underground testing facilities.

The intelligence provided by the facilities informs the nation's overall security strategy by tracking modernisation needs and providing an accurate estimate of its weapons stockpile.

The supercomputing facilities were part of the NNSA's US$1.7 billion, two-year Advanced Simulation and Computing project, which commenced in 2007 and currently boasts 12 supercomputers connected by a high-grade secure network.

Amongst its arsenal are two Roadrunners, including a 24,000-processor Roadrunner Phase-3 capable of 1,280 TeraFLOPs (FLoating point Operations Per Second), a 131,072-processor IBM BlueGene/L capable of 367 TeraFLOPs, and a 147,456-processor Dawn supercomputer capable of 501.4 TeraFLOPs.

At one of the three facilities, according to the GAO, none of the plans for disaster recovery had been tested, leaving staff with little clue about what would happen and what to do if it suffered a major outage.

"Due to lack of planning and analysis by NNSA and the laboratories, the impact of a system outage is unclear," the GAO found.

While the computers could deploy cross-facility capacity sharing in the event of a failure, the GAO found that the department didn't know the minimum supercomputing capacity needed to meet program requirements.

"Without having an understanding of capacity needs and subsequent testing, the laboratories have little assurance that they could effectively share capacity if needed," the GAO report said.
